Pontignano, Italy, August 2020

– COMPIT’20 –

Platform-of-Platforms and Symbiotic Digital Twins:

Real Life Practical Examples and a Vision for the Future

Nick Danese, NDAR, Antibes/France, nick@ndar.com

Denis Morais, SSI, Victoria/Canada, denis.morais@SSI-corporate.com

Abstract

The platform-of-platforms paradigm remains a genetic condition to start enacting the Digital Transformation by leveraging the multiple digital twins that compose “the” Digital Twin in the parallel-processing yet overall asynchronous process underlying automated data & information availability to all. This approach is virtuously inscribed in any PLM macro-process environment.

Funded projects aimed at various tool integration goals are underway, and the first commercially available “open architecture” solutions appeared in 2019. Some are based solely on existing software, others required development of “universal” digital twin connectivity (a blessing in disguise). Research in Platform-of-Platforms solutions available today will be reviewed and a vision of the near- and longer-term future presented.

1. Introduction

In fairness to those who unknowingly invented Digital Twins it is a duty to state: Computers, ergo Digital Twins. “Cogito, ergo sum” could have been thought of as a non-sequitur in the still theocratic enough days of Descartes, but that very real Digital Twins remained undefined as such for decades can be surprising at a time when quantum physics is already “old stuff”.

Leaping ahead to 2020, we can take stock of 35 years of PLM and see how little exists today in the way of a truly relational/associative work environment, how complex it is to set one up and, upstream, how little people understand what it would be useful for in the first place. (One of the first recorded applications of PLM was in 1985 by American Motors Corporation, who were looking for a way to speed up the product development process of the Jeep Grand Cherokee. The first step was the use of CAD tools with the primary objective of increasing the productivity of the draughtsmen – see www.concurrent-engineering.co.uk.) Having said that, hats off to those who have tried so hard to build such environments and, to varying extents, somewhat succeeded in the face of undeniable, objective hardware, software and cultural limitations.

On the good side, the evolution of software, industry and market conditions as well as the cultural relation to computing have fed an IT ecosystem very different from the one present when computers were first really put to the task some 60 years ago: the agnostic combination of data sets has spurred the advent of the platform of platforms era, which is finally underway.

2. Platform of platforms: more than just technology

Very loosely defined, a platform is a piece of a process: a computer, a program, a person, another process, etc. In this respect, attention is still today paid almost exclusively to technology but, looking beyond the “Product” in the Product Life Cycle, one finds people. People create products and consume products: people are the life of products and, hence, the distinct strands composing the DNA of all the processes associated with products. It can then be said that people have been the major cause of the birth of the platform of platforms environment and, symbiotically, that the capitalist economy and consumerism becoming the way of life were a major vector thereof.

Without being too picky on semantics, both computers and software tools are platforms, although it can be argued that different cultures, disciplines, data exploitation scenarios, etc. are platforms, too.

A simple example of the above is, simply, the computer itself: in order for a software product to exist it must run on a computer, hence the computer manufacturer provides a platform that hosts other platforms and, arguably, vice versa.

Looking at the software tool itself, it generally serves platforms extending way beyond other software: for example, while an internet page source is the same for everyone, the pop-up will be tailored to the individual who has logged on to that PC, perhaps even contextually with the contents of the web page being displayed. In this case, the advertising engine simply exploits the existence of the browser, the former existing only thanks to the existence of the latter and the latter supporting the existence of the former.

Finally, the person looking at the screen will make a decision: buy something, move to another page, turn the PC off, etc. That action makes the person a platform, i.e. an active component of the process.

In short, although still much unrecognised today, authors and consumers have forever been one and the same: each platform supports other platforms, all platforms together create the ensuing process.

Although not the topic of this paper, it is worth noting that the above is already seen in the nature of the good old subroutines present since the very early days of programming, some of which work together despite being written in different languages.

On the philosophical side of technology, one could extrapolate and relate the deep meaning of the Ancient Greek meta-data and today’s AI aim at making computers think: it may be wise to heed Descartes’ “cogito, ergo sum”. Such relations suggest that Digital Transformation, Platform of Platforms and Digital Twins are more than “just technology”.

3. The distributed, collaborative environment at the source of the Digital Twin

A timeless reality, the fragmentation of the design and production processes into several time- and scope-limited segments constitutes in itself a significant communications problem, compounded by the fact that many of the different software tools in use today rest on unyielding proprietary data structures, many of which are metadata-poor.

3.1. The distributed environment

The environment has always been one of multiple authors, individually and separately generating all sorts of interlaced data using multiple software programs and data formats, and based on multiple platforms. This leads to multiple instances of data and information which can very easily become a genetic, self-immune disease of every process, and create noise and confusion throughout the framework of the design & production space. The unavoidable incompleteness, skew and subjectivity of each instance add to the uncertainty, which people attempt to palliate with repeated data transmission, effectively increasing confusion. Errors are the ensuing corollary and force rework, which itself causes a domino-cascade effect of resource reassignment and compounded losses.

The farther along the way an error occurs, the greater the damage, and all in the absence of a guarantee that all direct and indirect issues will eventually be resolved at all.

On the bright side, a distributed environment has the immense potential of being able to collect and enact all relevant contributions to a given process in virtually unlimited fashion. One such example is crowd engineering, where the final consumer is the author of the product itself. Many large consumer product companies, but also many software companies, have experimented with crowd engineering in recent years, and several commercial web platforms have been set up for this.

The on-going challenge is to coordinate the inherent fragmentation of the distributed environment by managing the platforms, their work, data and information and to make everything available, not just accessible, a job that PLM platforms have attempted to carry out since 1985, Danese and Pagliuca (2019).

3.2. The collaborative environment

Another pillar of a successful process is the correct and timely use of not just relevant but actually correct data, information and processes by the concerned authors. While much processing can already today be accomplished automatically by software programmed for the task, some processes or portions thereof are carried out by people. In all cases, a certain level of discernment must be applied at all levels in every process: some can be provided by AI and some must be contributed by correctly informed people. Despite the existence of countless “checking” strategies, as numerous as they are ineffective if not detrimental, it remains a challenge to be correctly informed. Still too often people don’t even know whether they are informed correctly or not, and have no means of knowing it, let alone of improving their level of information.

In order to be of use, data and information have to be put at the disposal of the concerned authors in a timely fashion and in a format suitable for their consequent use. Then, where the data is, which format it is stored in, when it was stored, how it was generated, who provided it, etc. become fundamental parameters that define the usefulness of the data in question for the prescribed purpose.
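As a minimal sketch of the parameters just listed, the record below attaches the where, format, when, how and who to a piece of data and checks whether it suits a given consumer. The names `DataRecord` and `is_fit_for_purpose`, and the two criteria used (accepted formats and maximum age), are assumptions made for illustration only and are not taken from any of the tools discussed in this paper.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class DataRecord:
    """Hypothetical provenance record for one item of a Digital Twin."""
    location: str        # where the data is
    file_format: str     # which format it is stored in
    stored_at: datetime  # when it was stored
    generated_by: str    # how it was generated (tool or process)
    provided_by: str     # who provided it


def is_fit_for_purpose(record, accepted_formats, max_age, now):
    """Return True if the record is in a usable format and recent enough.

    Both criteria are illustrative; a real consumer would add its own.
    """
    format_ok = record.file_format in accepted_formats
    fresh_enough = (now - record.stored_at) <= max_age
    return format_ok and fresh_enough
```

A consumer that accepts only `dwg` and `3dm` files no older than one day would, for example, call `is_fit_for_purpose(record, {"dwg", "3dm"}, timedelta(days=1), datetime.now())` before using the data.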

That Digital Twins are created and maintained in asynchronous fashion only exacerbates the need to coordinate the team. Adaptive communications, intrinsic in the LEAN and AGILE paradigms, become paramount and a genetic component of any successful effort, first and foremost by reinjecting data and information produced by the consumption thereof – e.g. by the authors – into the process’ information stream via, inter alia, the feedback loop that is key to managing change.

Implementation of the required information process, indicating whether or not some data is available or a decision has been made, is another on-going challenge to be addressed by PLM.

3.3. The Digital Twin: A collection of interconnected Unique Models

Unique Models (essentially unique data sets) compound the power and effectiveness of making data, information and goods available. A Unique Model is composed of data being made available to a given recipient and specifically so in its contents, formatting, timing, etc. Unique Models are nothing else but Digital Twins themselves, made up of pieces of other Digital Twins. This elevates the concept of a Digital Twin above the notion of data, information, author, source, etc. In fact, and perhaps even more important:

• A true Digital Twin may even contain, for example, “equivalent” data sets from different authors required to perform similar tasks at different levels of sophistication: for example, a coarse mesh FE model for concept validation and definition of scantlings and a fine mesh FE model for subsequent local forced response vibration analysis. The two models are different, not interchangeable, but equivalent in that they represent the same ship.

• On a more meta-data yet digital level, the true Digital Twin may include in its “discernment” space opposing opinions bearing on, for example, the ship’s performance required for the mission profile.

Another example of the absolute value of Digital Twins in what remains a “relative” reality is Computational Fluid Dynamics (CFD), a discipline that makes use of separate models to achieve the same goal and which sees different software and people use the same data set to arrive at very different results and conclusions.

What is relevant is therefore that the “master” Digital Twin includes all the useful data, information, metadata, etc. and that this is regrouped in coherent “subset” Digital Twins made available to the concerned authors in a timely fashion and appropriate format.

This is something that the original sequential PLM was not very strong at, but it is a definite possibility given today’s improved understanding of many-to-many processes and the support provided by very performant hardware and adaptive relational/associative software. The quasi-science fiction neural networks of the 1980s now live through the still infant AI applications of today’s advertising and will continually increase the ubiquity of the Digital Twin’s value. It may be argued that AI is still rich in rational algorithms and not yet very creative: for example, think of the advertising that continues to pop up for an item that was purchased once, months ago, never before, never since.

4. The authors of the Digital Twin

For simplicity, rather than listing authors specifically and attempting to identify individual people, processes, software, etc., the authors at the source of the ship design and shipbuilding Digital Twin space are represented here as “activities” and their life-period documented within the duration of a Product Life Cycle.

It is immediately evident that the scope of application of the Digital Twin is from t=0 to scrapping and that operational and refit data will enter the picture, too. On the other hand, still today many show surprise at some activities being present during the complete life cycle, or just about, for example: Sales, Production Engineering, etc.

Interestingly and very instructively, drawing up the same chart for software, people and processes provides a very complementary vision of which of them are active when. The cross-connecting of activities, processes, software, people, etc. can go on and on in various directions and to finer and finer detail. The result is the expected, pervasive many-to-many, heavily parallel workspace.

Although somewhat “proprietary”, the software products taken as an example on this occasion illustrate well how some unsuspected ones are required and should be used at unexpected times during the life cycle of the product, exploiting the multi-author Digital Twin to the benefit of the overall process, that is the entwined financial bottom lines of shipyard and ship owner.

Of course, any and every software tool present on the market is a potential author: it must be considered and its use evaluated versus its applicability and measurable contribution to the Digital Twin and its exploitation in the context of the open architecture Platform of Platforms ecosystem advocated here.

In addition to software being an author per-se – for example, let us consider the automatic re-run of a stability analysis that changes scope to include different Class criteria as a function of some non-geometric input such as mission profile, number of passengers, etc. – it will be vital that people-authors have available and know how to use the software that will allow them to contribute constructively to the Digital Twin.

This requirement could be perceived as difficult to satisfy when looking outside engineering. A telling example is that of a sales person who must be able to compose a “custom” vessel based on a “series” platform in the presence of the customer and document feasibility – is the VCG too high, or the weight in excess of the allowed maximum displacement, etc. – and price while reviewing configuration options, all without calling the office and typically finding that the whereabouts of the one person who could have answered the question are unknown.
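The kind of on-the-spot feasibility check described above can be sketched as follows, purely as an illustration. The sketch assumes that the “series” platform and each configuration option are known as a weight and a VCG, and that the limits are a maximum displacement and a maximum allowable VCG; the names `WeightItem`, `combined_weight_and_vcg` and `feasibility_issues` are hypothetical, and a real check would rely on the stability and weight tools feeding the Digital Twin.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WeightItem:
    """One weight group: the series platform or a configuration option."""
    name: str
    weight_t: float  # weight in tonnes
    vcg_m: float     # vertical centre of gravity above baseline, in metres


def combined_weight_and_vcg(items):
    """Total weight and weight-averaged VCG of all items."""
    total = sum(item.weight_t for item in items)
    if total <= 0:
        raise ValueError("total weight must be positive")
    moment = sum(item.weight_t * item.vcg_m for item in items)
    return total, moment / total


def feasibility_issues(items, max_displacement_t, max_vcg_m):
    """List the limits (assumed here) that the configuration exceeds."""
    total, vcg = combined_weight_and_vcg(items)
    issues = []
    if total > max_displacement_t:
        issues.append("weight exceeds the allowed maximum displacement")
    if vcg > max_vcg_m:
        issues.append("VCG is too high")
    return issues
```

An empty list means that the configuration passes these two checks only; it says nothing about the many other criteria a complete evaluation would cover.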

In fact, the ubiquity not just of data but also of tools and processes, be they authors or communication engines, is probably the major driving factor of the successful Digital Twin and exploitation thereof.

The above leads to the notion that a Platform of Platforms is not, cannot and must not be an absolute notion. On the contrary, it is relative to and tailored by its purpose.

5. Digital Twins: The good, the bad and the ugly

From a functional standpoint Digital Twins are good or bad, there really is no in-between, and there is no such thing as a Good Universal Digital Twin Template in existence. It is a fact that every sizeable Digital Twin around today is indeed incomplete, that is the ugly, and it could be argued that an impeccable but incomplete Digital Twin is possibly an acceptable, not-so-ugly-after-all halfway point. How ugly is ugly will be determined by the true level and amount of risk posed by the Digital Twin’s condition of incompletion: that will move the pointer towards good or bad, a very difficult situation when that pointer is in fact a subjective evaluation.

From a Taoist, Yin-Yang perspective and making do already with what one has, it will be constructive and productive to fall back to the individual “sub” Digital Twins that make up the Digital Twin: once the goal is mapped clearly, it will be better to be 100% sure of 80% of something rather than 80% sure of 100% of the same. This will be further expanded upon in a later section, but another underlying sine-qua-non condition must be satisfied for a Digital Twin to be valid in the least, that is the existence of a managed information stream.

6. Information streams: Work-In-Progress-Real-Time vs Release

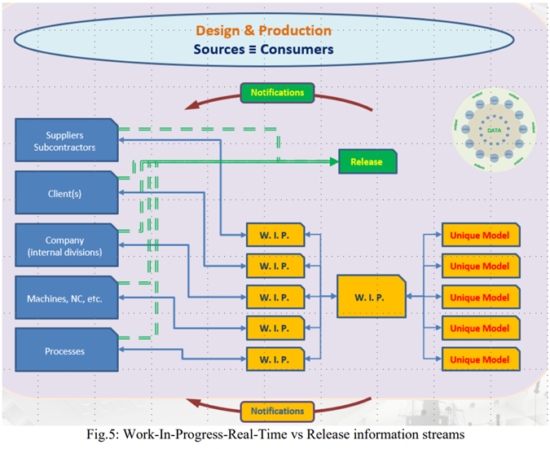

It is safe to say that there is no functional Digital Twin without some information flow, and there are at least two major information streams underlying a useful Digital Twin:

• Work-In-Progress-Real-Time

• Release

The Work-In-Progress-Real-Time information stream flows all the time and is just that: everyone feeds their respective WIP work to the Digital Twin space, concerned authors are notified, a feedback loop is provided to channel their reaction back to the Digital Twin space, etc. All those concerned see what others are doing in certain areas of the Digital Twin. The Work-In-Progress nature of the Digital Twin is instrumental in spurring notes, comments, etc. and in spawning checks, what-if studies, and the earliest possible troubleshooting. The inherently asynchronous multi-platform Digital Twin can live, let alone thrive, if and only if an efficient Work-In-Progress-Real-Time information stream is present.
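The notification and feedback mechanics of such a stream can be illustrated with a very small in-memory sketch. It is not a description of any product mentioned in this paper: the class `WipStream`, its methods and the idea of subscribing authors to named “areas” of the Digital Twin are all assumptions made for the example.

```python
from collections import defaultdict


class WipStream:
    """Hypothetical in-memory Work-In-Progress stream."""

    def __init__(self):
        self._subscribers = defaultdict(list)  # area -> concerned authors
        self.inbox = defaultdict(list)         # author -> notifications
        self.feedback = []                     # reactions fed back to the space

    def subscribe(self, author, area):
        """Register an author as concerned by an area of the Digital Twin."""
        self._subscribers[area].append(author)

    def publish(self, author, area, note):
        """Feed WIP work to the space and notify the other concerned authors."""
        for other in self._subscribers[area]:
            if other != author:
                self.inbox[other].append((area, author, note))

    def react(self, author, area, comment):
        """Feedback loop: record the reaction and notify the others in turn."""
        self.feedback.append((area, author, comment))
        self.publish(author, area, "feedback: " + comment)
```

A naval architect publishing a hull update would thus notify the structural engineer subscribed to the same area, whose reaction would in turn be recorded and notified back.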

The Release information stream flows only when some portion of a Digital Twin becomes fixed by being “released”. For example:

• an engine has been selected: all subsequent work related to the engine must refer to the selected engine

• the structural layout has been finalized: all subsequent work related to structure must refer to and make use of that structural layout

• the GA has been validated by the ship owner: the structural engineer is thereafter not allowed to change the location of pillars

• …

Once released, that part of the Digital Twin becomes immutable and the corresponding Work-In-Progress stream is stopped.
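The rule stated above, that a released part becomes immutable and its Work-In-Progress stream stops, can be sketched as a simple guard. The names `TwinPart` and `ReleasedError` are hypothetical and serve only to illustrate the rule, not how any particular PLM system implements it.

```python
class ReleasedError(Exception):
    """Raised when someone tries to change a released part."""


class TwinPart:
    """Hypothetical part of a Digital Twin with WIP and Release states."""

    def __init__(self, name):
        self.name = name
        self.released = False
        self.release_decision = None
        self.wip_updates = []  # (author, note) pairs received while in WIP

    def post_wip(self, author, note):
        """Accept WIP work only as long as the part has not been released."""
        if self.released:
            raise ReleasedError(self.name + " is released; WIP stream is stopped")
        self.wip_updates.append((author, note))

    def release(self, decision):
        """Fix the part: record the decision and stop the WIP stream."""
        if self.released:
            raise ReleasedError(self.name + " is already released")
        self.released = True
        self.release_decision = decision
```

After `release("engine selected")` is called on an engine part, any further `post_wip` call raises `ReleasedError`, which mirrors the requirement that all subsequent work refer to the selected engine.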

It is not the topic of this paper to discuss the implementation of information streams, but it will be noted that even “just useable” solutions are still very hard to come by, all the more so outside the highest echelons of the industry. On the other hand, some level of success can be achieved by creatively using document management systems that allow such streams while respecting security and intellectual property requirements. In the authors’ experience there are several candidates on the market, ARAS PLM and Microsoft’s Sharepoint based TEAMS being two incumbent favourites.

7. Practical Platforms of Platforms, Digital Twins, PLM, etc.

A universal Digital Twin is utopia: even if one were to collect absolutely all the data, information, etc. specific to shipbuilding, each company will implement different processes and follow different decision-making strategies as a function of its specific requirements and constraints. The scope of the research leading to the present paper was therefore intentionally focused on the parts of a Digital Twin that are easiest for any company to implement and that lead to the shortest-term, highest possible ROI within any generic, every-day context, that is, the practical ones.

There are many seminal components of any given Digital Twin, and some have been mentioned already. One often unrecognised yet vital component of a good Digital Twin deserves a special mention: the ability to handle change management well. While information streams are crucial to convey the need for changes, the changes themselves, the results of changes and their consequences, the authors must be able to document changes in the first place, this consideration referring mostly to software and to its ability to identify parts of its own Digital Twin as a “change”.

Otherwise, seminal components of Digital Twins include:

• People, Vision and Added Value

• Technology

• Software, Platforms and Tools

• Unique Models / Digital Twins

• Culture

• …

This paper will focus on technology, software platforms and tools, considering that Unique Models / Digital Twins are an achievable “given” within the company x context being considered and knowing that change management is already handled effectively in at least one of the out-of-the-box tool sets selected for the research initiative: the SSI DigitalHub and the SSI ShipbuildingPLM environments.

7.1. Practical

There are several daunting aspects in considering the overall concept of the Digital Twin and its effective use:

• the sheer amount of data

• the tens, hundreds and thousands of people involved

• the IT infrastructure required

• the difficulty in defining processes

• the inherent complexity of processes

• identifying which author will contribute what

• identifying the relative importance / gravity of an action or of its result

• the ever-changing requirements governing the above

• security

• the human handbrakes: fear of change, aversion to responsibility, etc.

• …

Something is practical when it can be easily and effectively used by those involved to achieve the pursued goal. Now, while certainly not a substitute for a correct PLM implementation, the ensemble of many commonly available software tools already in use can be federated into a Platform of Platforms to build a not-so-ugly Digital Twin, which can then be exploited PLM-style to generate ROI immediately, by virtually anyone.

In this respect, one crucial very early task when designing a Digital Twin is to establish the desired results, benefits and target ROI zones. If a Digital Twin cannot exist in a practical manner, no ROI will be generated, it will quickly become very ugly and, eventually, bad. On the contrary, losses will very likely ensue.

7.2. People, Vision and Added Value

Very directly related to “practical”, people are too often the minuscule grain of sand that blocks the entire mechanism. Arguably, little has changed in data and information sharing techniques, processes and strategies in the marine industry since mainstream CAD and spreadsheets became available on the first PCs in the early 1980s, followed by user-friendlier databases in the early 1990s. AutoCAD® (by Autodesk®, USA), Microsoft® Excel® and Access® continue to be used and at least the former two remain the de-facto ubiquitous backbone of most companies. People have changed little when it comes to making good use of the Digital Twin that begs them to do so from their PC screens.

Vision is probably the most sorely missed driver of all industrial evolutions. The Kodak Moment becoming everyone else’s with the advent of the digital camera is probably the most recent and resounding consequent disaster. (The Kodak moment was Kodak’s commercial banner, “the sentimental or charming moment worthy of capturing in a photograph”, which became the epitomic moment when executives fail to realize how consumers are changing and how markets will ultimately evolve in new directions without them.) The lack of investment in implementing and exploiting existing tools to generate overall company ROI is not the sole area where short-sightedness is the cause of false economies. For example, man hours are often counted as such, i.e. as a straight cost, as opposed to being valued and assigned as a function of their potential to generate added value. Current practice still includes reliance on:

• (subjective) human communications

• assumption that messages, duties, tasks, deliverables, etc. are evident, clear and known to everyone

• cost minimization taking precedence over ROI-producing choices

The lack, if not the absence, of a managed data & information sharing policy and strategy is accompanied by an under-exploitation of tools already in common use. Integration, cooperation, sharing, ERP and PLM are as commonly talked about and referred to as they are not implemented effectively, or at all. Added value at the company level is vital, that is how much was spent unnecessarily vs the sold-at price, an often-ignored metric. Moreover, the potentially misleading measure of perceived “margin” at the microscopic level, typically the number of hours taken to produce something, takes precedence over measuring the impact of rework at company level, leading to potentially catastrophic false economies. Immediate opportunities to generate added value are the reduction, if not elimination, of errors, of multiple instances of data and information, and of confusion: that is the mission of the Digital Twin.

7.3. Software, Platforms and Tools

Perhaps ironically, AutoCAD v.1 was a full-fledged 3D CAD program in 1982, Excel has always offered multi-dimensional matrices and a weakly relational environment, Access was released in 1992 as a fully relational database system, and all have included interfacing and integrating capabilities since the mid-1990s.

Another current world-wide de-facto standard is Rhinoceros3D®, by McNeel & Associates, better known as Rhino3D®. In 1997, 100000 users adopted Rhino3D’s beta version within one year: it filled a technical void with user-friendly surface modelling. Victims of their own ease-of-use, these and other common software products are used by virtually everyone to satisfy a limited, immediate need but are exploited only marginally and at a constantly diminishing fraction of their growing potential. This represents substantial company underperformance, a considerable missed revenue opportunity and a weakening of the Digital Twin.

Today, many programs in addition to those mentioned here read and write a variety of proprietary data formats, but sharing meta-data remains another story altogether. Shared environments like DropBox®, OneDrive®, etc. are present on pretty much every PC and smart phone but are used as mere repositories rather than as the dynamic sharing platforms they are. This is another example of platforms which exist and are used separately rather than being harmonized to build a good, however small, Digital Twin.

Connecting back to practical and recalling that communications are a sine-qua-non criterion and requirement, some software categories are defined accordingly:

• general purpose

• Read/Write different native formats

• multi-discipline

• integrated: run inside another software

• interfaced: shares data & information directly or via a native format with no degradation

• directly compliant: direct connection to Big Data, Digital Twin, AR, VR, IIIoT&S (Intelligent Industrial Internet of Things & Services)

Additionally, connected programs are considered only if they contribute exceptionally to the overall process despite their connectivity limitations, and can be categorized as:

• share data & information indirectly via a file format transformation/conversion, degradation is not ruled out

Other discriminating aspects were selected to include:

• size and industry-wide distribution of current user base

• options provided by the tool to communicate with all consumers: people, machines, other software, processes, etc.

• scope of application: design, production, both

• current and projected potential to serve the Integrated, Collaborative, Multi-Authoring, Managed Environment

A further level of categorizing is that of “purpose”, an admittedly anachronistic approach given the more recent evolutions in just about all software but nonetheless informative enough to justify being carried out. Some “purposes” could be:

• CAD

• Data & Information “management”

• file & document management systems

• rising technology: VR, AR, Big Data, Digital Twin, IIIoT&S

7.4. Out-of-the-box

The first goal of this research was the construction of an out-of-the-box Integrated, Collaborative, Multi-Authoring, Managed Environment made of tools that fulfil as many of the criteria listed here as possible, specifically being immediately available and productive for company x. The AutoCAD and Rhinoceros platforms were selected because they do so and additionally offer a number of unique business values, including:

• compatibility at the native data format level with each other and several other general purpose and specialist software

• easy to use, short time to proficiency

• significant untapped productivity potential

• several mainstream plug-ins and companion software based on these native formats and making use of the environment of both platforms

It is remarked that the sheer number of plausible candidate software products available in addition to those selected for the study lends additional legitimacy to this study and its findings, Danese and Dardel (2019).

8. A Paradigm Shift

The fundamental requirements, multiple platforms, rising technologies, varied formats specific to the candidate software components and the diversity of authors in nature, scope and purpose suggest a new approach to data & information use, storage and management, not dissimilar from the internet and processed Big Data models.

The functional paradigm that emerges from this study identifies a “space” in which multiple authors evolve in a collectively non-linear, individually linear, asynchronous, multi-relational, multi-platform, multi-format, collaborative fashion: that of the Digital Twin. (Somewhat ahead of this paper’s conclusion, it will be noted that SSI’s EnterprisePlatform presents autonomous ubiquity of service and document handling automation characteristics which allow a vast data & information set extending far beyond what would be manageable with everyday manual procedures. The project space’s Digital Twin then grows to include crucial documents hitherto never considered, making EnterprisePlatform one of the few sine-qua-non parts of every performant Platform of Platforms.) The “space” collects a variety of data & information in different, heterogeneous formats:

• text files

• proprietary format files (3dm, dwg, etc.)

• xml files

• databases

• images, renderings, etc.

• Augmented and Virtual Reality

• …

9. Practical Example

The number of practical examples multiplied over the course of the work, also due to on-going advances in some of the software selected for the R&D initiative. One of the first, and in many ways least impressive, examples is illustrated here, its merits being relatively simple and within near reach. Not surprisingly, it was found on previous occasions that the full scope of the findings represents a challenge for those not open to considering, let alone accepting, how much can be done with so little, and for those intimidated by the gap between what is so very simply possible and immediately within grasp and their current practice. The more rewarding examples will be the subject of future reports on the present R&D initiative.

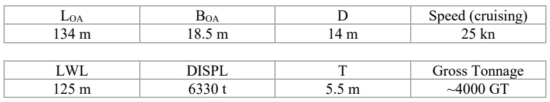

The example at hand consists in creating and maintaining a simple Digital Twin to serve some of the customary initial design activities, the subject being a 134 m Loa motor yacht concept, extended to the advocated (virtual) production. The work was commissioned by a leading shipyard for a commercial customer and it will be referred to here as M/Y Digital Twin (“MYDT”).

In the interest of consistency a few “qualifiers” are in order at this point:

• there is no convenient way to describe a non-linear collective process, therefore the author-to-author linear relations are presented in discrete format, grouped loosely in accordance with the somewhat overlapping software categorization by “purpose”

• referring to the “open architecture” mission of this R&D initiative, the reader is invited to replace the tools named here with her/his own if these were to be more productive.

• only a few author-to-author relationships and interactions can be described here. The reader is therefore invited to construe a little and implicitly consider the shared, collaborative space.

• similarly, a sequential representation of a non-linear process inevitably violates some priorities and sequences of events, the reader should abstract from the formality of “lists” and construe the integrated, concurrent design and production space.

• crucial to the environment’s high-performance, most of the tools considered support scripting and/or are automatable.

• unless explicitly noted otherwise, native file formats are used.

Moreover, a loose distinction is made between the overlapping phases of:

• design: from concept to design-for-production reviews, classification drawings and adjustments during production and warranty service, etc.

• production: starting with feasibility, early BOMs, weld estimations, what-if studies, design-for-production reviews, etc. through delivery and warranty, etc.

10. M/Y Digital Twin

MYDT’s principal characteristics are derived from initial GA plans, owner’s requirements and initial Naval Architecture calculations:

Only the hull was addressed at this stage. Aside from its weight and CG (which were estimated anyhow using ShipWeight®), explicit modelling of the superstructure would have contributed little to drawing conclusions in this very first phase of the research. Of course, explicit modelling of the superstructure would allow air flow analysis using the same tool set employed in the CFD study (Orca3D CFD), production planning estimations, etc. The CFD engine used to analyse the Rhino3D surface models faired with the Orca3D plug-in was SimericsMP (marine).

10.1. The Workspace

The common data set shared via the Digital Twin was exploited by several concurrent users, their actions and interactions being based on the native Rhino3D and AutoCAD 3D data formats. Concurrent work is better represented by a workspace than by a workflow. Nonetheless, following a more legacy approach, a “list” of tasks/roles and actions is used here to illustrate the workspace.

10.1.1. Tasks/Roles

Tasks and Roles are inherently one, and only software tasks/roles are reported here. A more complete report would also include those of processes and people. Tasks/roles included:

• ShipWeight: parametric weight and CG estimation, produce a full report for Naval Architect, Project Manager, etc., summaries in custom xml/text format for other authors.

• Cost Fact plus ShipWeight, ShipConstructor, ERP, etc.: parametric cost estimation, metadata merged with that of other authors.

• Rhino3D, Orca3D, Orca3D CFD plus V-Ray, Bongo, Penguin, Flamingo, Brazil etc.: create fair surface model to be the parametric base for other authors. Model compartments for stability calculations. Run early CFD experiments. Produce renderings, animations of doors, deck equipment, toys, etc.

• AutoCAD plus ShipConstructor, Revit, Inventor, AdvanceSteel, EnterprisePlatform, V-Ray, 3DS Studio Max, etc.: read the Rhino + ExpressMarine model, compose 3D GA, automatically produce the corresponding 2D drawings, BOMs, discipline 2D/3D drawings, study/review/management 3D models (full and partial). Produce renderings.

• EnterprisePlatform plus Project, Navisworks: create and automatically maintain the project space’s complete archive in accordance with planning. Use EP Operations to interact with other scriptable software, handle files generated by other authors (CAD, ERPs, DMSs, PLMs, MS-Excel, etc.). Merge with planning. Produce Unique Models.

10.1.2. Actions

Only software actions are reported here. A more complete report would also include those of processes and people. Actions included:

• Project plus Navisworks, ShipConstructor, other authors: merged, interactive planning with aligned geometry and meta-data.

• Rhino3D plus ExpressMarine3D: parametrically develop first model of non-dimensional primary and secondary structure based on hull shape and GA.

• AutoFEM: read ExpressMarine model, evaluate local/medium size structural arrangements.

• GHS: read Rhino hull model (with/without compartments), read ShipWeight weight curves, define loading conditions, compute damaged and probabilistic stability, longitudinal bending moment, run seakeeping. Produce report for Naval Architect, Project Manager, text/xml summaries for other authors.

• NavCad and PropElements: read the Rhino model (stl format), compute resistance, wave drag, propulsion train (engine, power, propeller), converge design space extents for CFD runs. Refine propeller sized in NavCad.

• MAESTRO: compose global structure model, first principles scantling calculations, read ShipWeight’s weight curve + GHS’s loading conditions, compute time & frequency domain ship motions, full fatigue screening, early optimisation for production. Read Rhino model (iges). Read ExpressMarine model, apply scantlings for first structural estimation.

• Navisworks: collect 2D drawings, Rhino3D, AutoCAD, GHS, MAESTRO, ShipConstructor, ShipWeight rich 3D models for review, comparison, project management. Produce Unique Models.

• Several authors: seed the VR, AR, Digital Twin, IIIoT&S environments.

• Data is tracked and monitored through the presence, absence or change of files identified in a user-defined, editable event list, and by tracking data and changes to it within xml files. Data will change as the various events occur, and the author(s) are notified via MS-TEAMS®, etc.

• Data & information are shared outside the company using any suitable shared environment (WAN, VPN, Cloud, etc.), such as DropBox, OneDrive, FTP, MS-TEAMS, etc. Email, USB sticks and similar unmanaged data transmission means are formally proscribed.
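The file-based tracking described in the list above can be illustrated with a minimal Python sketch. It is not the mechanism used in the R&D initiative, whose implementation is not detailed here. It only assumes that the event list is a plain list of file paths, that a content hash is an acceptable test for "changed", and that xml summaries are small enough to flatten into path/value pairs. All function names are hypothetical, and the notification step (MS-TEAMS, etc.) is deliberately left out.

```python
import hashlib
import xml.etree.ElementTree as ET
from pathlib import Path


def snapshot(watched):
    """Map each watched path to a content hash, or None if the file is absent."""
    state = {}
    for name in watched:
        path = Path(name)
        if path.is_file():
            state[name] = hashlib.sha256(path.read_bytes()).hexdigest()
        else:
            state[name] = None
    return state


def file_events(before, after):
    """Compare two snapshots and return (path, event) pairs, sorted by path."""
    events = []
    for name in sorted(after):
        old, new = before.get(name), after[name]
        if old is None and new is not None:
            events.append((name, "appeared"))
        elif old is not None and new is None:
            events.append((name, "disappeared"))
        elif old != new:
            events.append((name, "changed"))
    return events


def xml_values(xml_text):
    """Flatten an XML document into {element path: text} for leaf elements.

    Assumption: sibling elements have distinct tags; repeated tags would
    overwrite each other in this simple sketch.
    """
    values = {}

    def walk(element, prefix):
        path = f"{prefix}/{element.tag}"
        children = list(element)
        if not children:
            values[path] = (element.text or "").strip()
        for child in children:
            walk(child, path)

    walk(ET.fromstring(xml_text), "")
    return values


def xml_changes(old_text, new_text):
    """Return {element path: (old value, new value)} for values that differ."""
    old, new = xml_values(old_text), xml_values(new_text)
    return {
        key: (old.get(key), new.get(key))
        for key in sorted(set(old) | set(new))
        if old.get(key) != new.get(key)
    }
```

In use, `snapshot` would be called on the event list at regular intervals and consecutive snapshots passed to `file_events`. Any resulting event, or any non-empty result from `xml_changes`, would then trigger the notification of the authors concerned.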

As fundamental Naval Architecture parameters converge, some authors are called upon more than others, for example:

• AutoCAD plus ShipConstructor, EnterprisePlatform, Cost Fact: read the ExpressMarine model, create a formal structure model, advance main distributed systems model (pipe, HVAC, electrical), compose general reference model, produce Class drawings and advanced documentation for detailed cost analysis, produce renderings, VR, AR models (used with mock-ups).

• AutoCAD plus ShipConstructor, Revit, Inventor, V-Ray, 3DS Studio Max, etc.: advance 3D GA, produce 2D plans, renderings, feed the VR, AR, Digital Twin platforms.

• MS-TEAMS, other shared environment software: maintain data & information alignment amongst all authors.

This leads to further detailing the weight model and advancing final structural, stability and propulsion evaluations:

• ShipWeight: acquire advanced part lists with weights and CGs, continue comparison between weight budgets and weight of tracked items.

• AutoFEM, GHS, MAESTRO, NavCad, etc.: carry out advanced Naval Architecture checks, final life-cycle performance estimates.

• CostFact: increase detail level of cost prediction and analysis, accuracy of monitoring.

• EnterprisePlatform: compose initial work packages for production.
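The comparison between weight budgets and the weight of tracked items mentioned above reduces to simple arithmetic, sketched here in Python for illustration. This is not ShipWeight’s interface or data model. The `Part` record, the group labels and the function names are hypothetical, and only the weighted-average CG formula (sum of moments divided by total weight) is taken as given.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Part:
    group: str       # hypothetical weight-group label, e.g. "hull"
    weight_t: float  # weight in tonnes
    lcg_m: float     # longitudinal CG, metres from the chosen datum
    vcg_m: float     # vertical CG, metres above the chosen baseline


def roll_up(parts):
    """Return (total weight, LCG, VCG) of a part list via moment sums."""
    total = sum(p.weight_t for p in parts)
    if total <= 0:
        raise ValueError("total weight must be positive")
    lcg = sum(p.weight_t * p.lcg_m for p in parts) / total
    vcg = sum(p.weight_t * p.vcg_m for p in parts) / total
    return total, lcg, vcg


def budget_deviation(parts, budgets_t):
    """Return {group: tracked weight minus budget}; positive means over budget."""
    tracked = defaultdict(float)
    for p in parts:
        tracked[p.group] += p.weight_t
    return {group: tracked.get(group, 0.0) - budget
            for group, budget in budgets_t.items()}
```

As part lists with weights and CGs arrive from the other authors, re-running such a roll-up shows at once which groups drift from their budget and how the overall CG moves.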

At some point during the events mentioned above, production engineering and production will have commenced:

• AutoCAD, Rhino3D, Cost Fact, Project, etc. plus ShipConstructor, ExpressMarine, AutoFEM, EnterprisePlatform, Navisworks: produce detailed design model and drawings, production engineering data and documents, planning, work packages, KPI trackers, specific documents to support individual departments and disciplines, etc.

• AutoCAD, Rhino plus 3DS Studio Max, V-Ray, Brazil, Navisworks: rendering, VR, AR.

• AutoCAD, MIM plus ShipConstructor, Navisworks, EnterprisePlatform: Digital Twin, operational PLM model of the vessel.

11. Platform of Platforms and Symbiotic Digital Twins

Despite its intentionally simplified scope, the R&D initiative revealed the considerable extent of its practical reach, available here and now to company x. This is confirmed by the completion of every further action carried out in the workspace. Key guidelines are found in the AGILE and LEAN definition of the scheduled internal deliverables needed to track and manage the project’s progress and to identify situations requiring decisions, and of the ROI-generating external deliverables (of which drawings remain a component, albeit an increasingly minor one).

It is also important to note that, despite the significant data & information handling and management abilities of some software, the out-of-the-box environment includes some unavoidably unmanaged components that require ad-hoc definition, instruction and supervision.

12. Vision of the future

Companies with vision can safely table significant expectations as long as the human factor is taken into account realistically. Technology, software, understanding of processes, etc. progress faster than they can be tested, reviewed and analysed for rational implementation. This must not be perceived as a hindrance or as the cause of a self-damaging sense of “always being behind”. On the contrary, the definition of Platform of Platforms and the very nature of the symbiotic Digital Twin lend themselves to being easily fertilized by new, future seminal authors. It is the responsibility of the Digital Twin designers to plan for organic growth of the Digital Twin under AGILE and LEAN guidelines.

Progressive, ROI-driven evolution, not revolution, benefits from transition, which in turn requires temporarily co-existing, possibly even overlapping “equivalent” data sets, processes, etc. Naturally, new tools and processes will favour different parts, portions and aspects of the symbiotic Digital Twin, but this does not necessarily render obsolete those fallen into disuse (think of a life-time, non-evolving Digital Twin such as that of in-service nuclear power plants).

The vision of a positive, progressive future therefore rests on people with vision, who will define, design, maintain, evolve and implement Digital Twins, and exploit them wisely and with clear purpose, taking full advantage of technological, software, process, market and industry evolutions, and of the wealth of alive and healthy yet disregarded evolutions around us, everywhere and always. Then, it will be said: Vision, ergo Digital Transformation.

13. Conclusion

The symbiotic Digital Twin is a reality that is enhanced and improves every day. One just needs to look away from the false safety of legacy and accept the reality of the cultural, liberating paradigm shift that will immediately enhance a company’s quality, productivity and ROI.

In the near future, it may well be common software, much of it already in use in just about every office and shipyard around the world, that contributes to today’s and tomorrow’s good Digital Twin and launches a true Digital Transformation towards a good Platform of Platforms.

In the longer term, large, high-end systems will be the backbone of the evolving Platform of Platforms and cease being the monolithic solutions they are purported to be today. Ceasing to be a monolithic do-everything platform and becoming a part of the Platform of Platforms ecosystem will significantly help manage the symbiotic Digital Twin. It will also progressively eliminate the human factor overhead generated by the all-powerful yet impractical and elusive potential of large monolithic systems, which creates ideal yet unrealistic expectations, let alone goals.

Tomorrow there will be tiny and very large Platforms of Platforms side by side and interacting. The unimaginably large Platform of Platforms of tomorrow is already being built today, one Platform at a time, by people with vision.

The small feeding the big in LEAN and AGILE fashion, driven by the realistic and pragmatic visionary human, is the ultimate answer for all industries, marine included.

Acknowledgements

Of the very many that over time have contributed knowingly and unknowingly to my work and personal evolution, I will name two who exemplify the immense scope of help I was blessed with: Arkadiy Zagorskiy, central pillar in troubleshooting the implementation of the sometimes crazy processes I cook up on an almost daily basis and Denis Morais, intellectual sparring partner and seminal source of so much of my work. I am here today thanks to you all, too.

References

DANESE, N.; PAGLIUCA, P. (2019), Available vs Accessible data and information: The strategic role of adaptive communication in the Naval Architecture and Marine Engineering processes, CNM, Naples

DANESE, N.; DARDEL, S. (2019), Out-Of-The-Box Integrated, Collaborative, Multi-Authoring, Managed Environment for the Design and Construction of Large Yachts, Design & Construction of Super & Mega Yachts, Genova